Fast Way to Read Data in JavaScript

This blog post has an interesting inspiration point. Last week, someone in one of my Slack channels posted a coding challenge he'd received for a developer position with an insurance technology company.

It piqued my interest, as the challenge involved reading through very large files of data from the Federal Election Commission and displaying back specific data from those files. Since I've not worked much with raw data, and I'm always up for a new challenge, I decided to tackle this with Node.js and see if I could complete the challenge myself, for the fun of it.

Here are the four questions asked, and a link to the data set that the program was to parse through.

- Write a program that will print out the total number of lines in the file.

- Notice that the 8th column contains a person's name. Write a program that loads in this data and creates an array with all name strings. Print out the 432nd and 43243rd names.

- Notice that the 5th column contains a form of date. Count how many donations occurred in each month and print out the results.

- Notice that the 8th column contains a person's name. Create an array with each first name. Identify the most common first name in the data and how many times it occurs.

Link to the data: https://www.fec.gov/files/bulk-downloads/2018/indiv18.zip

When you unzip the folder, you should see one main .txt file that's 2.55GB and a folder containing smaller pieces of that main file (which is what I used while testing my solutions before moving to the main file).

Not too terrible, right? Seems achievable. So let's talk about how I approached this.

The Two Original Node.js Solutions I Came Up With

Processing large files is nothing new to JavaScript. In fact, in the core functionality of Node.js, there are a number of standard solutions for reading and writing to and from files.

The most straightforward is fs.readFile(), wherein the whole file is read into memory and then acted upon once Node has read it. The second option is fs.createReadStream(), which streams the data in (and out), similar to other languages like Python and Java.
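
To make the difference concrete, here is a minimal sketch of both approaches side by side. The function names are made up for illustration, and each one simply reports how many characters it saw, which is not part of the challenge.

```javascript
const fs = require('fs');

// Option 1: read the whole file into memory, then act on it.
function readWholeFile(filePath, onDone) {
  fs.readFile(filePath, 'utf8', (err, contents) => {
    if (err) return onDone(err);
    // At this point the full contents sit in memory as one string.
    onDone(null, contents.length);
  });
}

// Option 2: stream the file and handle it one chunk at a time.
function readAsStream(filePath, onDone) {
  let totalChars = 0;
  fs.createReadStream(filePath, { encoding: 'utf8' })
    .on('data', (chunk) => { totalChars += chunk.length; })
    .on('error', onDone)
    .on('end', () => onDone(null, totalChars));
}
```

With the first option, memory use grows with the size of the file. With the second, only the current chunk needs to be held at any moment, provided nothing else accumulates the data.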

The Solution I Chose to Run With & Why

Since my solution needed to involve such things as counting the total number of lines and parsing through each line to get donation names and dates, I chose to use the second method: fs.createReadStream(). Then, I could use the rl.on('line',...) function to get the necessary data from each line as I streamed through the document.

It seemed easier to me than having to split apart the whole file once it was read in and run through the lines that way.
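
As a rough illustration of that choice (a sketch, not the code from the screenshots in this post), the first question can be answered by wiring readline on top of a read stream. The countLines name and the promise wrapper are assumptions made for this example.

```javascript
const fs = require('fs');
const readline = require('readline');

// Count the lines of a file without holding the whole file in memory.
function countLines(filePath) {
  return new Promise((resolve, reject) => {
    const input = fs.createReadStream(filePath);
    input.on('error', reject);

    // crlfDelay: Infinity treats "\r\n" as a single line break.
    const rl = readline.createInterface({ input, crlfDelay: Infinity });

    let lineCount = 0;
    rl.on('line', () => { lineCount++; });      // fires once per line
    rl.on('close', () => resolve(lineCount));   // fires when the input ends
  });
}
```

Calling countLines() with the path to one of the smaller test files and logging the resolved value would print the total number of lines.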

Node.js CreateReadStream() & ReadFile() Code Implementation

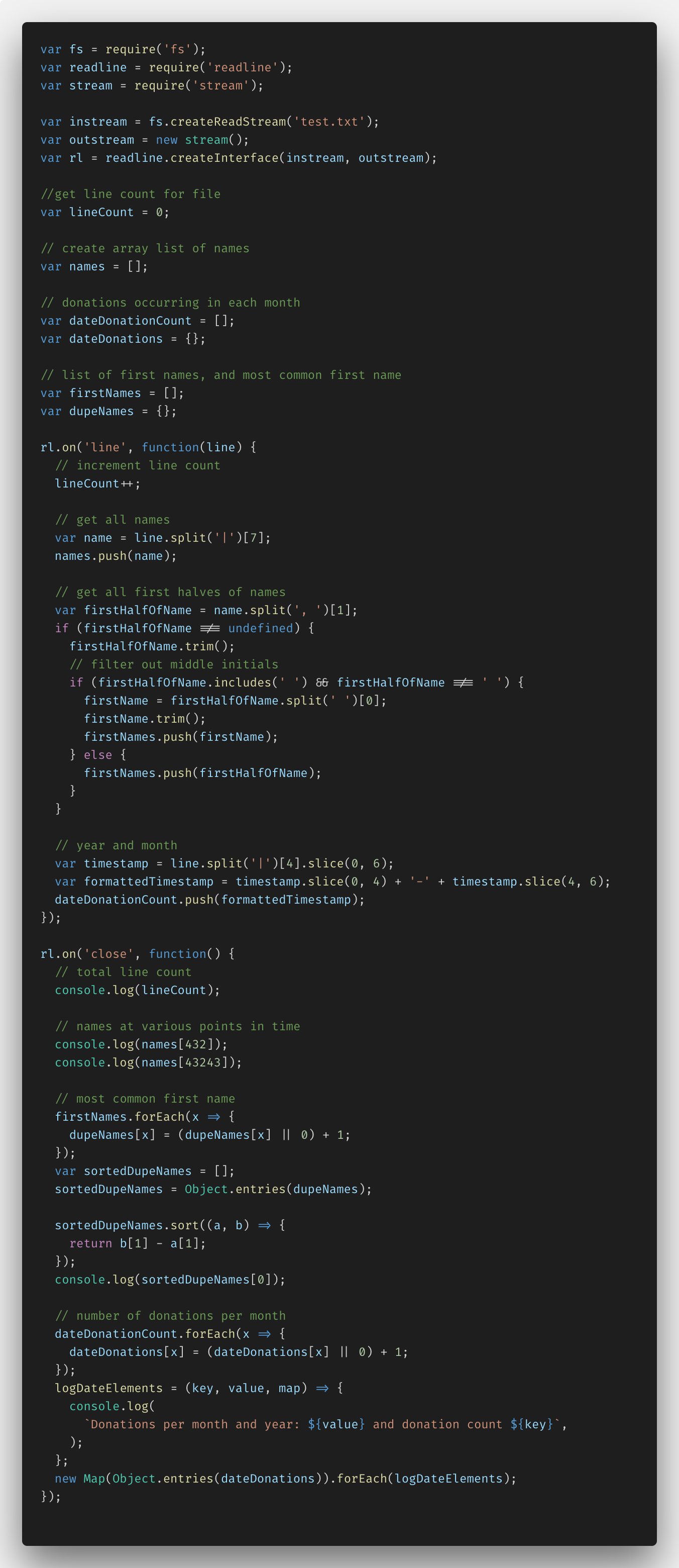

Below is the code I came up with using Node.js's fs.createReadStream() function. I'll break it down below.

The very first things I had to do to set this up were import the required modules from Node.js: fs (file system), readline, and stream. These imports allowed me to then create an instream and outstream and then the readline.createInterface(), which would let me read through the stream line by line and print out data from it.

I also added some variables (and comments) to hold various bits of data: a lineCount, names array, donation array and object, and firstNames array and dupeNames object. You'll see where these come into play a little later.

Inside of the rl.on('line',...) function, I was able to do all of my line-by-line data parsing. In here, I incremented the lineCount variable for each line it streamed through. I used the JavaScript split() method to parse out each name and added it to my names array. I further reduced each name down to just first names, while accounting for middle initials, multiple names, etc. along with the first name, with the help of the JavaScript trim(), includes() and split() methods. And I sliced the year and month out of the date column, reformatted those to a more readable YYYY-MM format, and added them to the dateDonationCount array.
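
The per-line parsing could look something like the sketch below. Several details are assumptions that the post does not spell out: that fields are separated by a "|" character, that names are written as "LAST, FIRST MIDDLE", and that the 5th column begins with the digits YYYYMM. The parseLine helper is hypothetical.

```javascript
// Assumed layout (not confirmed by the post): "|"-separated fields,
// names as "LAST, FIRST MIDDLE", and a 5th column starting with YYYYMM.
function parseLine(line) {
  const columns = line.split('|');
  const name = columns[7] || '';                 // 8th column: full name
  const rawDate = (columns[4] || '').slice(0, 6); // 5th column: first six characters
  const month = `${rawDate.slice(0, 4)}-${rawDate.slice(4, 6)}`; // YYYY-MM

  // Keep what follows the comma, then take the first word as the first name.
  // This drops middle initials and any additional names.
  let firstName = '';
  if (name.includes(',')) {
    firstName = name.split(',')[1].trim().split(' ')[0];
  }

  return { name, firstName, month };
}

// Example with a made-up line:
console.log(parseLine('C001|N|M2|P|201702039042410894|15|IND|SMITH, JOHN A|CITY'));
```

Keeping the parsing in a small pure function like this makes it easy to try against a handful of sample lines before pointing it at the full file.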

In the rl.on('close',...) function, I did all the transformations on the data I'd gathered into arrays and console.logged out all my data for the user to see.

The lineCount and names at the 432nd and 43,243rd index required no further manipulation. Finding the most common name and the number of donations for each month was a little trickier.

For the most common first name, I first had to create an object of key value pairs for each name (the key) and the number of times it appeared (the value), then I transformed that into an array of arrays using the ES2017 function Object.entries(). From there, it was a simple task to sort the names by their value and print the largest value.
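
A minimal sketch of that tally-then-sort idea follows. The mostCommon helper is a name invented for this example, and the sample names are made up.

```javascript
// Tally how often each value occurs, then sort the [value, count] pairs.
function mostCommon(values) {
  // A prototype-free object avoids clashes with built-in keys
  // such as "constructor".
  const counts = Object.create(null);
  for (const value of values) {
    counts[value] = (counts[value] || 0) + 1;
  }

  // Object.entries() turns { JOHN: 2 } into [['JOHN', 2]] so it can be sorted.
  const sorted = Object.entries(counts).sort((a, b) => b[1] - a[1]);
  return sorted[0]; // [value, count], or undefined for an empty input
}

console.log(mostCommon(['JOHN', 'MARY', 'JOHN', 'ANN'])); // [ 'JOHN', 2 ]
```

Sorting every entry is more work than strictly needed to find one maximum, but it mirrors the approach described above and also makes it easy to print a top ten.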

Donations also required me to make a similar object of key value pairs and create a logDateElements() function where I could use ES6's string interpolation to nicely display the keys and values for each donation month. Then I created a new Map(), transforming the dateDonations object into an array of arrays, and looped through each array calling the logDateElements() function on it. Whew! Not quite as simple as I first thought.
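
Here is one way the Map step might look, as a sketch. The names logDateElements and dateDonations come from the description above, while formatDateElement, the sample tallies, and the output wording are assumptions for illustration.

```javascript
// Format one month's total with a template literal (string interpolation).
function formatDateElement(count, month) {
  return `Donations in ${month}: ${count}`;
}

function logDateElements(count, month) {
  console.log(formatDateElement(count, month));
}

// Made-up tallies; the real ones would be built up while streaming.
const dateDonations = { '2017-01': 2, '2017-02': 1 };

// Object.entries() yields [month, count] pairs, which Map accepts directly.
// Map.prototype.forEach calls back with (value, key), so the count comes first.
new Map(Object.entries(dateDonations)).forEach(logDateElements);
```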

But it worked. At least with the smaller 400MB file I was using for testing…

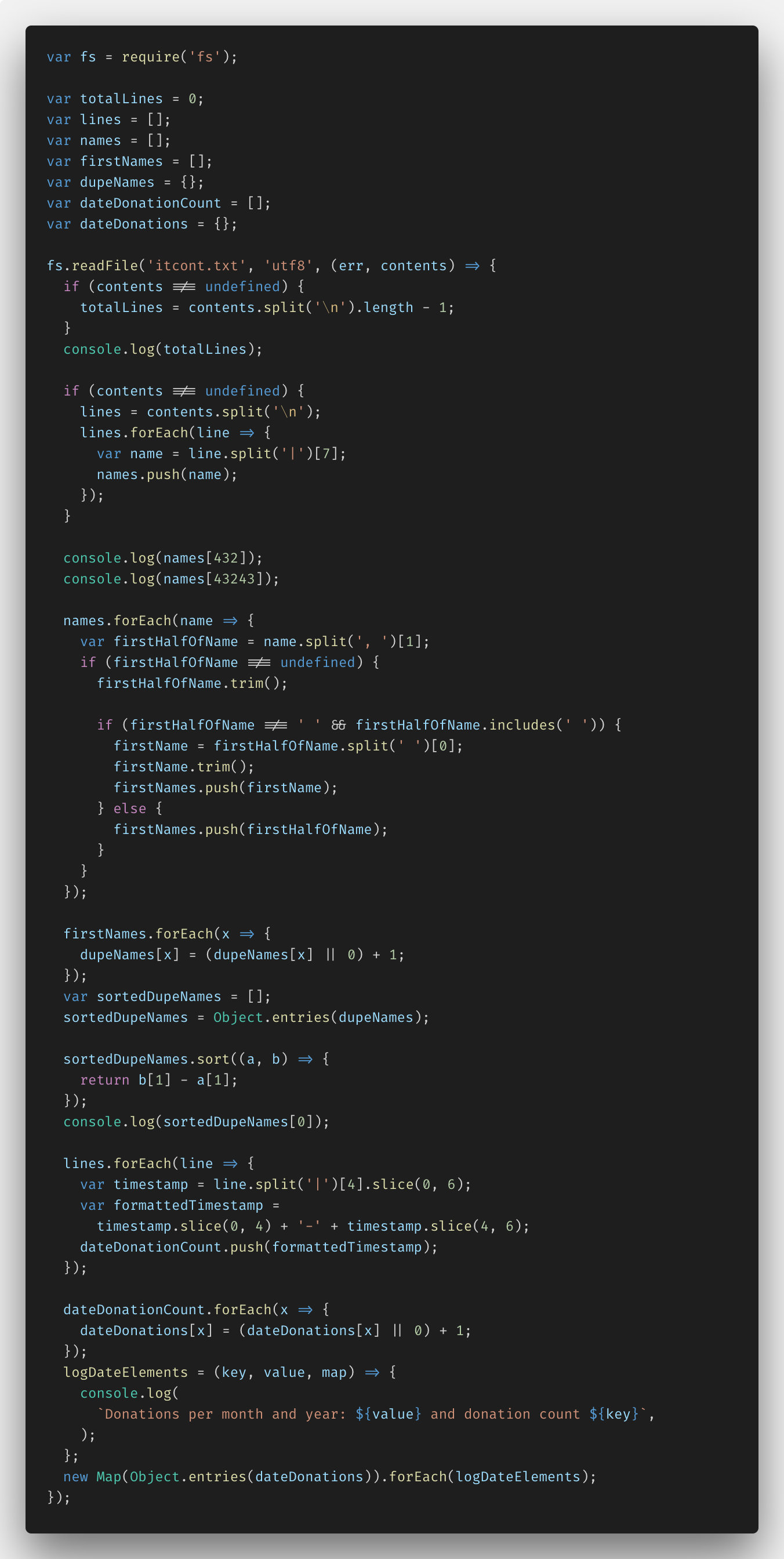

After I'd done that with fs.createReadStream(), I went back and also implemented my solutions with fs.readFile(), to see the differences. Here's the code for that, but I won't go through all the details here. It's pretty similar to the first snippet, just more synchronous looking (unless you use the fs.readFileSync() function, though, JavaScript will run this code just as asynchronously as all its other code, not to worry).

If you'd like to see my full repo with all my code, you can see it here.

Initial Results from Node.js

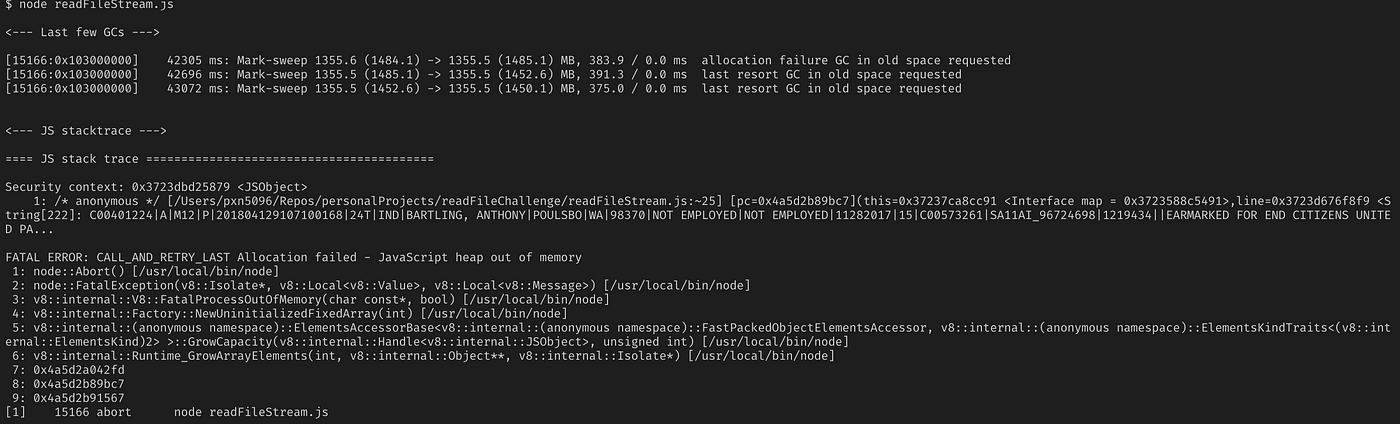

With my working solution, I added the file path into the readFileStream.js file for the 2.55GB monster file, and watched my Node server crash with a JavaScript heap out of memory error.

As it turns out, although Node.js is streaming the file input and output, in between it is still attempting to hold the entire file contents in memory, which it can't do with a file that size. Node can hold up to 1.5GB in memory at one time, but no more.
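
The exact ceiling is worth checking on your own machine, since it depends on the Node version and on flags such as --max-old-space-size. A small sketch using the built-in v8 module (the heapLimitInGB helper is a name chosen for this example):

```javascript
const v8 = require('v8');

// Report the heap size limit of the current Node process in gigabytes.
function heapLimitInGB() {
  const { heap_size_limit } = v8.getHeapStatistics(); // value is in bytes
  return heap_size_limit / 1024 ** 3;
}

console.log(`Heap limit: ${heapLimitInGB().toFixed(2)} GB`);
```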

So neither of my current solutions was up for the full challenge.

I needed a new solution. A solution for even larger datasets running through Node.

The New Data Streaming Solution

I found my solution in the form of EventStream, a popular NPM module with over 2 million weekly downloads and a promise "to make creating and working with streams easy".

With a little help from EventStream's documentation, I was able to figure out how to, once again, read the file line by line and do what needed to be done, hopefully in a more CPU friendly way for Node.

EventStream Code Implementation

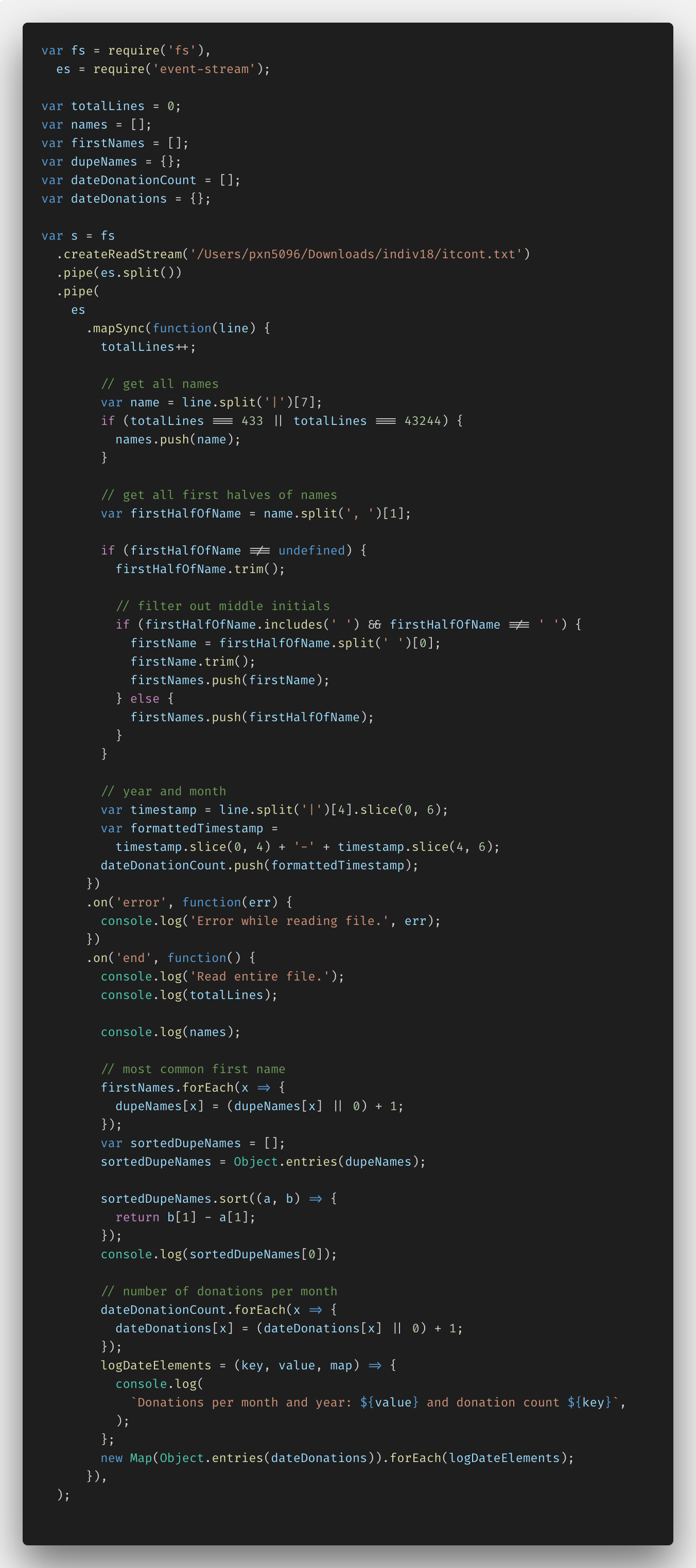

Here's my new code using the NPM module EventStream.

The biggest change was the pipe commands at the beginning of the file. All of that syntax is the way EventStream's documentation recommends you break up the stream into chunks delimited by the \n character at the end of each line of the .txt file.
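
To show what "breaking the stream into chunks delimited by \n" means mechanically, here is a sketch built only on Node's built-in stream module. To be clear, this is a stand-in for illustration and not EventStream's own code or API; createLineSplitter is a hypothetical name.

```javascript
const { Transform } = require('stream');
const { StringDecoder } = require('string_decoder');

// Turns arbitrary chunks of text into one 'data' event per line.
function createLineSplitter() {
  const decoder = new StringDecoder('utf8'); // keeps multi-byte characters intact
  let leftover = '';

  return new Transform({
    readableObjectMode: true, // emit each line as its own item
    transform(chunk, encoding, callback) {
      const pieces = (leftover + decoder.write(chunk)).split('\n');
      leftover = pieces.pop(); // the last piece may be an incomplete line
      for (const line of pieces) this.push(line);
      callback();
    },
    flush(callback) {
      leftover += decoder.end();
      if (leftover) this.push(leftover); // final line without a trailing \n
      callback();
    },
  });
}
```

A read stream created with fs.createReadStream() can be piped into this splitter, and a 'data' listener on the result then receives one line at a time, which is the same shape of pipeline described above.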

The only other thing I had to change was the names answer. I had to fudge that a little bit, since if I tried to add all 13MM names into an array, I again hit the out of memory issue. I got around it by just collecting the 432nd and 43,243rd names and adding them to their own array. Not quite what was being asked, but hey, I had to get a little creative.
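
That workaround can be sketched as a small collector that counts every name but stores only the ones at the requested positions. The createNameCollector helper is hypothetical, and treating the positions as 1-based is an assumption.

```javascript
// Keep only the names at the requested positions instead of all of them.
function createNameCollector(positions) {
  const wanted = new Set(positions); // assumed to be 1-based positions
  const found = [];
  let seen = 0;

  return {
    add(name) {
      seen++;
      if (wanted.has(seen)) found.push(name);
    },
    result() {
      return found;
    },
  };
}

// Small demonstration with made-up data and positions 2 and 4.
const demo = createNameCollector([2, 4]);
['A', 'B', 'C', 'D', 'E'].forEach((name) => demo.add(name));
console.log(demo.result()); // [ 'B', 'D' ]
```

For the challenge itself, the positions would be 432 and 43243, with add() called once per line as the file streams through. Memory use stays flat no matter how many names go by.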

Results from Node.js & EventStream: Round 2

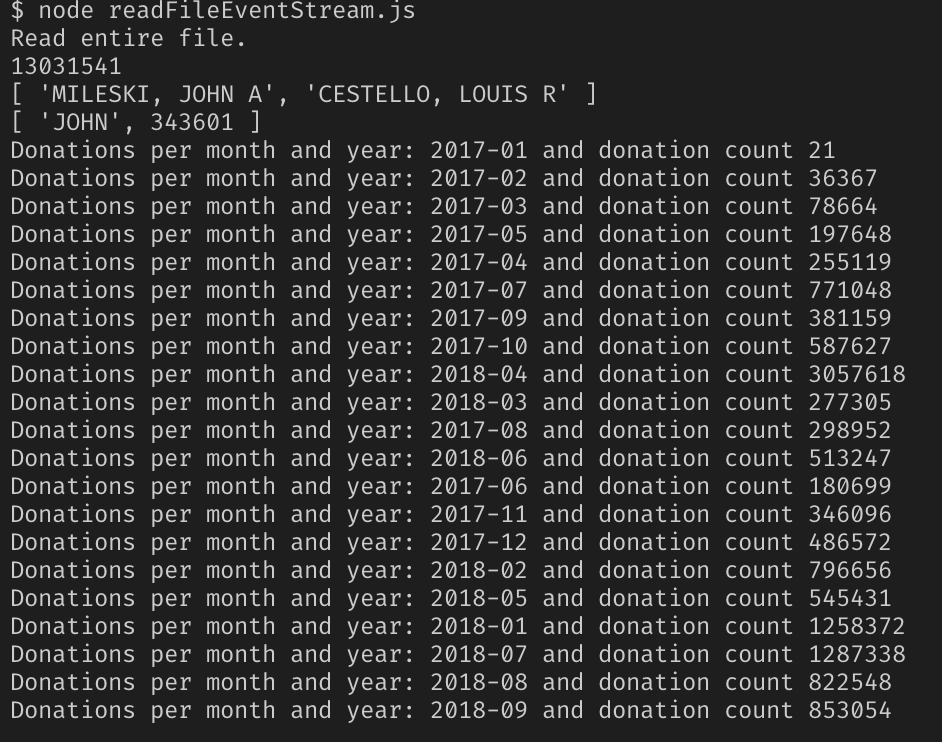

OK, with the new solution implemented, I once again fired up Node.js with my 2.55GB file and my fingers crossed that this would work. Check out the results.

Success!

Conclusion

In the end, Node.js's pure file and big data handling functions fell a little short of what I needed, but with just one extra NPM package, EventStream, I was able to parse through a massive dataset without crashing the Node server.

Stay tuned for part 2 of this series, where I compare my three different ways of reading data in Node.js with performance testing to see which one is truly superior to the others. The results are pretty eye opening, especially as the data gets larger…

Thanks for reading. I hope this gives you an idea of how to handle large amounts of data with Node.js. Claps and shares are very much appreciated!

If you enjoyed reading this, you may also enjoy some of my other blogs:

- Postman vs. Insomnia: Comparing the API Testing Tools

- How to Use Netflix's Eureka and Spring Cloud for Service Registry

- Jib: Getting Expert Docker Results Without Any Knowledge of Docker

Source: https://itnext.io/using-node-js-to-read-really-really-large-files-pt-1-d2057fe76b33
